How to deploy Kubernetes cluster add-ons


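Add-ons are auxiliary components of a Kubernetes cluster that extend and round out its functionality. This article covers CoreDNS, Dashboard, and Metrics Server. Note that the manifest YAML files of the add-ons bundled with Kubernetes reference images on the gcr.io Docker registry, which is blocked in mainland China. Either replace the image address with another registry by hand, or download the images in advance on a server that can reach gcr.io and then copy them to the corresponding k8s deployment machines.

1 - Kubernetes cluster add-ons - CoreDNS

The blocked images can be downloaded from the free gcr.io proxy provided by Microsoft China. All deployment commands below are run on the k8s-master01 node.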
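Replacing the registry address by hand can be scripted. The following is a minimal sketch, assuming GNU sed and a manifest whose image lines start with `image: k8s.gcr.io/`; the mirror address `registry.example.com/google_containers` and the function name `rewrite_registry` are placeholders invented for illustration, not a real registry or an existing tool.

```shell
#!/usr/bin/env bash
# Sketch: point the image references in a manifest at a mirror registry.
# MIRROR is a placeholder value, not a real registry address.
MIRROR="${MIRROR:-registry.example.com/google_containers}"

# rewrite_registry FILE
# Replaces the k8s.gcr.io prefix on "image:" lines, editing FILE in place.
# Lines such as "imagePullPolicy:" are left untouched.
rewrite_registry() {
  local manifest="$1"
  sed -i -e "s#image: *k8s\.gcr\.io/#image: ${MIRROR}/#" "$manifest"
}
```

For example, `rewrite_registry coredns.yaml` would rewrite the CoreDNS manifest before `kubectl create -f coredns.yaml` is run.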

1) Modify the configuration file

After extracting the downloaded kubernetes-server-linux-amd64.tar.gz, extract the kubernetes-src.tar.gz file inside it.

    [root@k8s-master01 ~]# cd /opt/k8s/work/kubernetes
    [root@k8s-master01 kubernetes]# tar -xzvf kubernetes-src.tar.gz

After extraction, the CoreDNS directory is cluster/addons/dns.

    [root@k8s-master01 kubernetes]# cd /opt/k8s/work/kubernetes/cluster/addons/dns/coredns
    [root@k8s-master01 coredns]# cp coredns.yaml.base coredns.yaml
    [root@k8s-master01 coredns]# source /opt/k8s/bin/environment.sh
    [root@k8s-master01 coredns]# sed -i -e "s/__PILLAR__DNS__DOMAIN__/${CLUSTER_DNS_DOMAIN}/" -e "s/__PILLAR__DNS__SERVER__/${CLUSTER_DNS_SVC_IP}/" coredns.yaml

2) Create CoreDNS

    [root@k8s-master01 coredns]# fgrep "image" ./*
    ./coredns.yaml:        image: k8s.gcr.io/coredns:1.3.1
    ./coredns.yaml:        imagePullPolicy: IfNotPresent
    ./coredns.yaml.base:        image: k8s.gcr.io/coredns:1.3.1
    ./coredns.yaml.base:        imagePullPolicy: IfNotPresent
    ./coredns.yaml.in:        image: k8s.gcr.io/coredns:1.3.1
    ./coredns.yaml.in:        imagePullPolicy: IfNotPresent
    ./coredns.yaml.sed:        image: k8s.gcr.io/coredns:1.3.1
    ./coredns.yaml.sed:        imagePullPolicy: IfNotPresent

Download the "k8s.gcr.io/coredns:1.3.1" image in advance through a proxy, upload it to the node machines, and run "docker load ..." to import it into each node's local images. Alternatively, pull the image from the free gcr.io proxy provided by Microsoft China and update the CoreDNS image address in the YAML file. Make sure the image pull policy in the YAML file is IfNotPresent, meaning the local image is used when present and nothing is pulled. Then create CoreDNS:

    [root@k8s-master01 coredns]# kubectl create -f coredns.yaml

3) Check that CoreDNS works (after running the command below, wait a moment and make sure everything shows as READY)

    [root@k8s-master01 coredns]# kubectl get all -n kube-system
    NAME                           READY   STATUS    RESTARTS   AGE
    pod/coredns-5b969f4c88-pd5js   1/1     Running   0          55s

    NAME               TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)                  AGE
    service/kube-dns   ClusterIP   10.254.0.2   <none>        53/UDP,53/TCP,9153/TCP   56s

    NAME                      READY   UP-TO-DATE   AVAILABLE   AGE
    deployment.apps/coredns   1/1     1            1           57s

    NAME                                 DESIRED   CURRENT   READY   AGE
    replicaset.apps/coredns-5b969f4c88   1         1         1       56s

Check the status of the CoreDNS pod and make sure there are no errors:

    [root@k8s-master01 coredns]# kubectl describe pod/coredns-5b969f4c88-pd5js -n kube-system
    .............
    .............
    Events:
      Type    Reason     Age    From                 Message
      ----    ------     ----   ----                 -------
      Normal  Scheduled  2m12s  default-scheduler    Successfully assigned kube-system/coredns-5b969f4c88-pd5js to k8s-node03
      Normal  Pulled     2m11s  kubelet, k8s-node03  Container image "k8s.gcr.io/coredns:1.3.1" already present on machine
      Normal  Created    2m10s  kubelet, k8s-node03  Created container coredns
      Normal  Started    2m10s  kubelet, k8s-node03  Started container coredns

4) Create a new Deployment

    [root@k8s-master01 coredns]# cd /opt/k8s/work
    [root@k8s-master01 work]# cat > my-nginx.yaml <<EOF
    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: my-nginx
    spec:
      replicas: 2
      template:
        metadata:
          labels:
            run: my-nginx
        spec:
          containers:
          - name: my-nginx
            image: nginx:1.7.9
            ports:
            - containerPort: 80
    EOF

Create the Deployment:

    [root@k8s-master01 work]# kubectl create -f my-nginx.yaml

Expose the Deployment to generate the my-nginx service:

    [root@k8s-master01 work]# kubectl expose deploy my-nginx
    [root@k8s-master01 work]# kubectl get services --all-namespaces | grep my-nginx
    default   my-nginx   ClusterIP   10.254.170.246   <none>   80/TCP   19s

Create another Pod and check whether its /etc/resolv.conf contains the --cluster-dns and --cluster-domain values configured for kubelet, and whether the my-nginx service resolves to the ClusterIP 10.254.170.246 shown above.

    [root@k8s-master01 work]# cd /opt/k8s/work
    [root@k8s-master01 work]# cat > dnsutils-ds.yml <<EOF
    apiVersion: v1
    kind: Service
    metadata:
      name: dnsutils-ds
      labels:
        app: dnsutils-ds
    spec:
      type: NodePort
      selector:
        app: dnsutils-ds
      ports:
      - name: http
        port: 80
        targetPort: 80
    ---
    apiVersion: extensions/v1beta1
    kind: DaemonSet
    metadata:
      name: dnsutils-ds
      labels:
        addonmanager.kubernetes.io/mode: Reconcile
    spec:
      template:
        metadata:
          labels:
            app: dnsutils-ds
        spec:
          containers:
          - name: my-dnsutils
            image: tutum/dnsutils:latest
            command:
            - sleep
            - "3600"
            ports:
            - containerPort: 80
    EOF

Create the pods:

    [root@k8s-master01 work]# kubectl create -f dnsutils-ds.yml

Check the status of the pods created above (wait a moment and make sure STATUS is "Running"; if a pod fails, run "kubectl describe pod ...." to find out why):

    [root@k8s-master01 work]# kubectl get pods -lapp=dnsutils-ds
    NAME                READY   STATUS    RESTARTS   AGE
    dnsutils-ds-5sc4z   1/1     Running   0          52s
    dnsutils-ds-h646r   1/1     Running   0          52s
    dnsutils-ds-jx5kx   1/1     Running   0          52s

    [root@k8s-master01 work]# kubectl get svc
    NAME          TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)        AGE
    dnsutils-ds   NodePort    10.254.185.211   <none>        80:32767/TCP   7m14s
    kubernetes    ClusterIP   10.254.0.1       <none>        443/TCP        7d13h
    my-nginx      ClusterIP   10.254.170.246   <none>        80/TCP         9m11s
    nginx-ds      NodePort    10.254.41.83     <none>        80:30876/TCP   27h

Now verify CoreDNS. Log in to each of the dnsutils pods created above and make sure the nameserver address in the container's /etc/resolv.conf equals the value of the "CLUSTER_DNS_SVC_IP" variable (defined in the environment.sh script).

    [root@k8s-master01 work]# kubectl -it exec dnsutils-ds-5sc4z bash
    root@dnsutils-ds-5sc4z:/# cat /etc/resolv.conf
    nameserver 10.254.0.2
    search default.svc.cluster.local svc.cluster.local cluster.local localdomain
    options ndots:5

    [root@k8s-master01 work]# kubectl exec dnsutils-ds-5sc4z nslookup kubernetes
    Server:     10.254.0.2
    Address:    10.254.0.2#53
    Name:    kubernetes.default.svc.cluster.local
    Address: 10.254.0.1

    [root@k8s-master01 work]# kubectl exec dnsutils-ds-5sc4z nslookup www.baidu.com
    Server:     10.254.0.2
    Address:    10.254.0.2#53
    Non-authoritative answer:
    www.baidu.com       canonical name = www.a.shifen.com.
    www.a.shifen.com    canonical name = www.wshifen.com.
    Name:    www.wshifen.com
    Address: 103.235.46.39

The my-nginx service resolves to its ClusterIP 10.254.170.246:

    [root@k8s-master01 work]# kubectl exec dnsutils-ds-5sc4z nslookup my-nginx
    Server:     10.254.0.2
    Address:    10.254.0.2#53
    Non-authoritative answer:
    Name:    my-nginx.default.svc.cluster.local
    Address: 10.254.170.246

    [root@k8s-master01 work]# kubectl exec dnsutils-ds-5sc4z nslookup kube-dns.kube-system.svc.cluster
    Server:     10.254.0.2
    Address:    10.254.0.2#53
    ** server can't find kube-dns.kube-system.svc.cluster: NXDOMAIN
    command terminated with exit code 1

    [root@k8s-master01 work]# kubectl exec dnsutils-ds-5sc4z nslookup kube-dns.kube-system.svc
    Server:     10.254.0.2
    Address:    10.254.0.2#53
    Name:    kube-dns.kube-system.svc.cluster.local
    Address: 10.254.0.2

    [root@k8s-master01 work]# kubectl exec dnsutils-ds-5sc4z nslookup kube-dns.kube-system.svc.cluster.local
    Server:     10.254.0.2
    Address:    10.254.0.2#53
    Name:    kube-dns.kube-system.svc.cluster.local
    Address: 10.254.0.2

    [root@k8s-master01 work]# kubectl exec dnsutils-ds-5sc4z nslookup kube-dns.kube-system.svc.cluster.local.
    Server:     10.254.0.2
    Address:    10.254.0.2#53
    Name:    kube-dns.kube-system.svc.cluster.local
    Address: 10.254.0.2

2 - Kubernetes cluster add-ons - Dashboard

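The blocked images can be downloaded from the free gcr.io proxy provided by Microsoft China. All deployment commands below are run on the k8s-master01 node.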

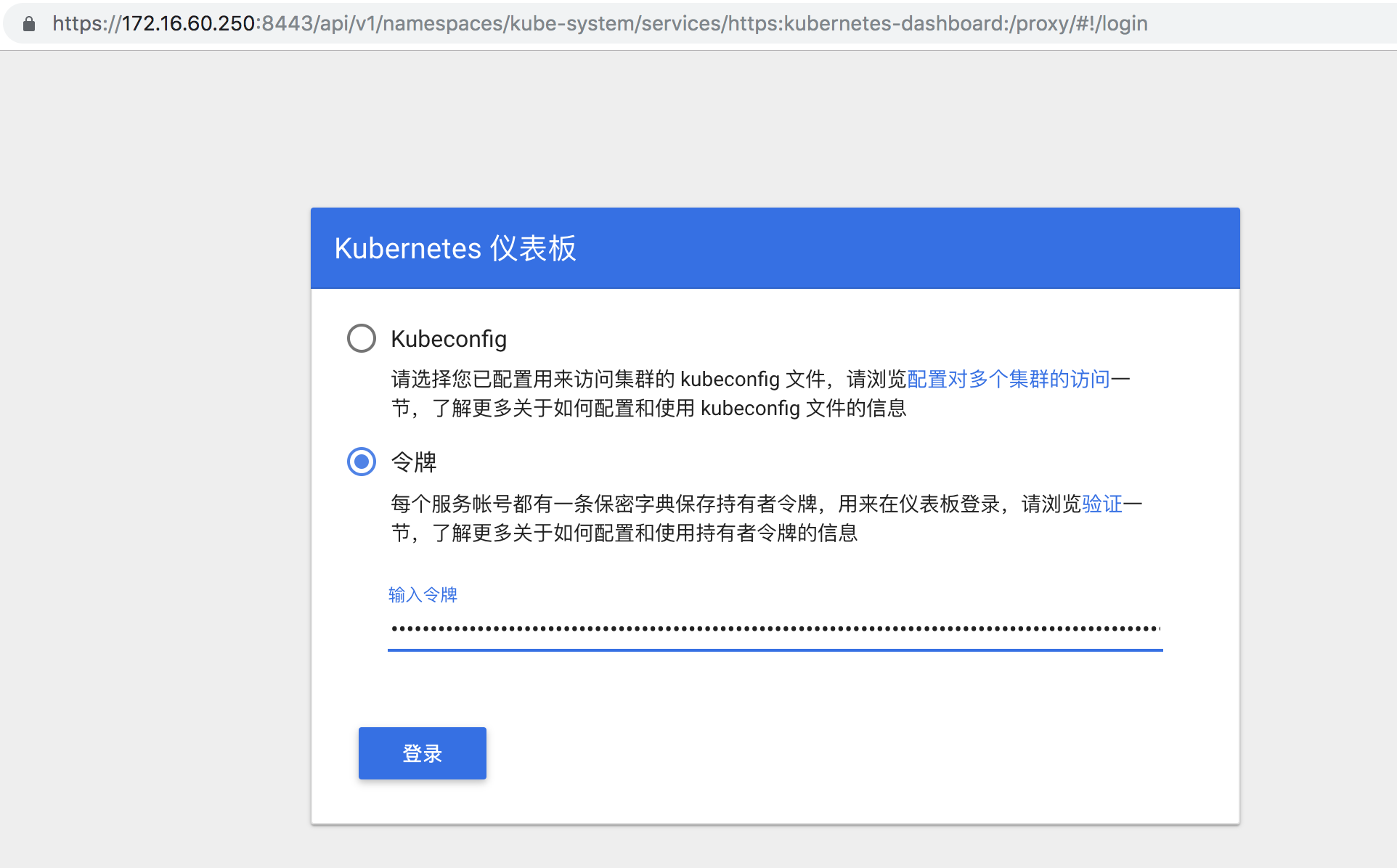

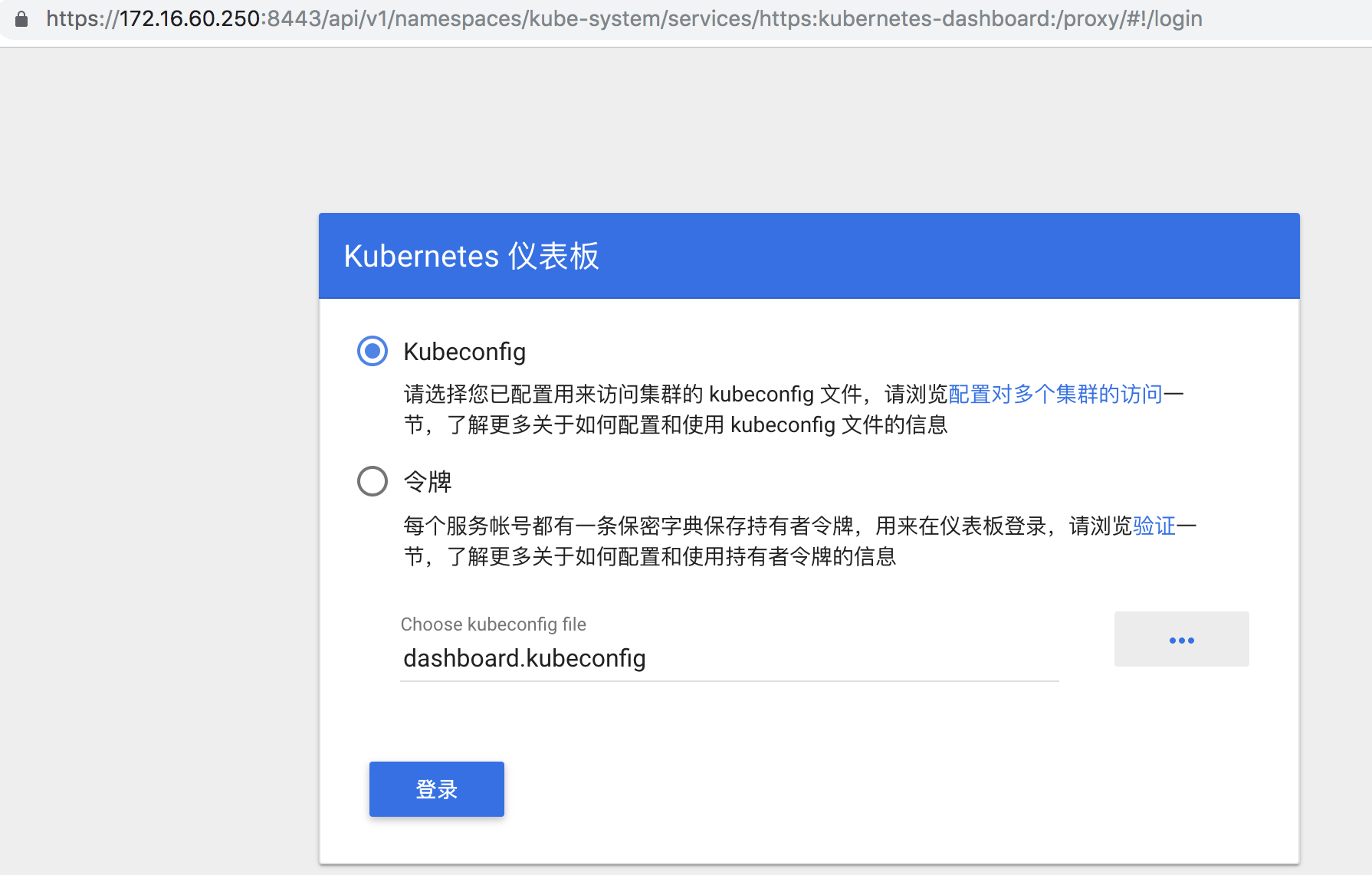

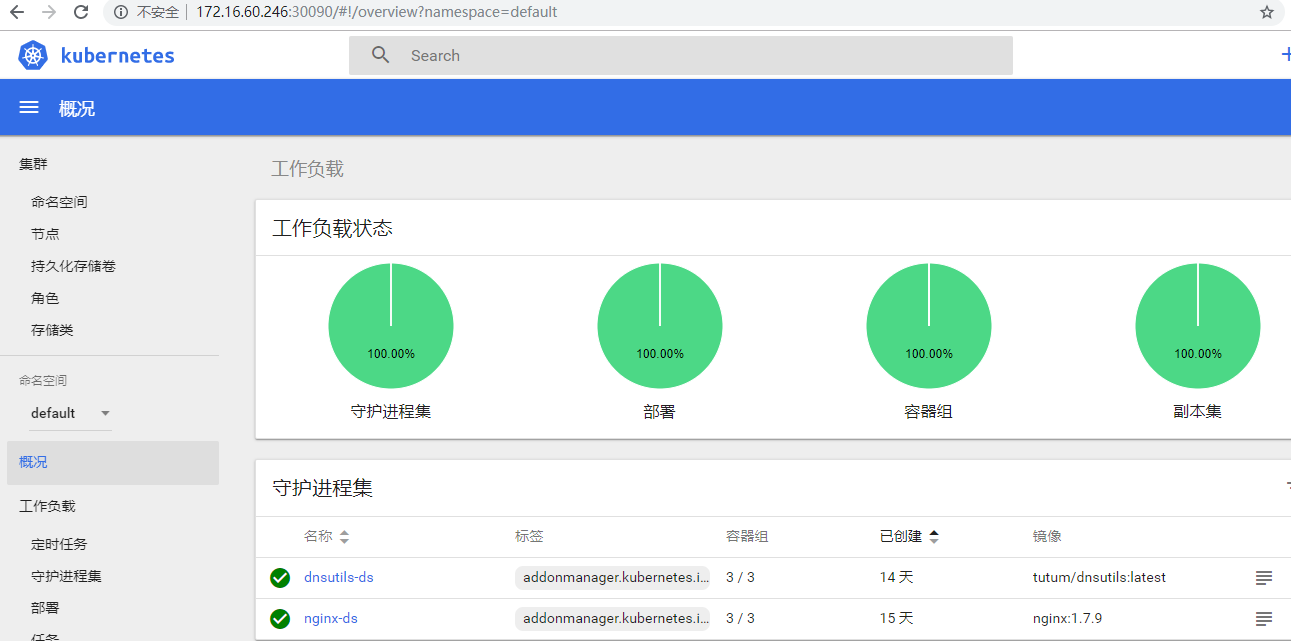

1) Modify the configuration file

After extracting the downloaded kubernetes-server-linux-amd64.tar.gz, extract the kubernetes-src.tar.gz file inside it (already done during the CoreDNS deployment above).

    [root@k8s-master01 ~]# cd /opt/k8s/work/kubernetes/
    [root@k8s-master01 kubernetes]# ls -d cluster/addons/dashboard
    cluster/addons/dashboard

The dashboard directory is cluster/addons/dashboard.

    [root@k8s-master01 kubernetes]# cd /opt/k8s/work/kubernetes/cluster/addons/dashboard

Edit the service definition and set the service type to NodePort so that the dashboard can be reached from outside at NodeIP:NodePort:

    [root@k8s-master01 dashboard]# vim dashboard-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: kubernetes-dashboard
      namespace: kube-system
      labels:
        k8s-app: kubernetes-dashboard
        kubernetes.io/cluster-service: "true"
        addonmanager.kubernetes.io/mode: Reconcile
    spec:
      type: NodePort    # add this line
      selector:
        k8s-app: kubernetes-dashboard
      ports:
      - port: 443
        targetPort: 8443

2) Apply all definition files

Download the k8s.gcr.io/kubernetes-dashboard-amd64:v1.10.1 image in advance through a proxy, upload it to the node machines, and run "docker load ......" to import it into each node's local images. Alternatively, pull the image from the free gcr.io proxy provided by Microsoft China and update the dashboard image address in the YAML file.

    [root@k8s-master01 dashboard]# fgrep "image" ./*
    ./dashboard-controller.yaml:        image: k8s.gcr.io/kubernetes-dashboard-amd64:v1.10.1

    [root@k8s-master01 dashboard]# ls *.yaml
    dashboard-configmap.yaml  dashboard-controller.yaml  dashboard-rbac.yaml  dashboard-secret.yaml  dashboard-service.yaml

    [root@k8s-master01 dashboard]# kubectl apply -f .

3) Check the assigned NodePort

    [root@k8s-master01 dashboard]# kubectl get deployment kubernetes-dashboard -n kube-system
    NAME                   READY   UP-TO-DATE   AVAILABLE   AGE
    kubernetes-dashboard   1/1     1            1           48s

    [root@k8s-master01 dashboard]# kubectl --namespace kube-system get pods -o wide
    NAME                                    READY   STATUS    RESTARTS   AGE   IP            NODE         NOMINATED NODE   READINESS GATES
    coredns-5b969f4c88-pd5js                1/1     Running   0          33m   172.30.72.3   k8s-node03   <none>           <none>
    kubernetes-dashboard-85bcf5dbf8-8s7hm   1/1     Running   0          63s   172.30.72.6   k8s-node03   <none>           <none>

    [root@k8s-master01 dashboard]# kubectl get services kubernetes-dashboard -n kube-system
    NAME                   TYPE       CLUSTER-IP       EXTERNAL-IP   PORT(S)         AGE
    kubernetes-dashboard   NodePort   10.254.164.208   <none>        443:30284/TCP   104s

The output shows that NodePort 30284 maps to port 443 of the dashboard service, which forwards to port 8443 in the pod.

4) View the command-line flags the dashboard supports

    [root@k8s-master01 dashboard]# kubectl exec --namespace kube-system -it kubernetes-dashboard-85bcf5dbf8-8s7hm -- /dashboard --help
    2019/06/25 16:54:04 Starting overwatch
    Usage of /dashboard:
          --alsologtostderr  log to standard error as well as files
          --api-log-level string  Level of API request logging. Should be one of 'INFO|NONE|DEBUG'. Default: 'INFO'. (default "INFO")
          --apiserver-host string  The address of the Kubernetes Apiserver to connect to in the format of protocol://address:port, e.g., http://localhost:8080. If not specified, the assumption is that the binary runs inside a Kubernetes cluster and local discovery is attempted.
          --authentication-mode strings  Enables authentication options that will be reflected on login screen. Supported values: token, basic. Default: token. Note that basic option should only be used if apiserver has '--authorization-mode=ABAC' and '--basic-auth-file' flags set. (default [token])
          --auto-generate-certificates  When set to true, Dashboard will automatically generate certificates used to serve HTTPS. Default: false.
          --bind-address ip  The IP address on which to serve the --secure-port (set to 0.0.0.0 for all interfaces). (default 0.0.0.0)
          --default-cert-dir string  Directory path containing '--tls-cert-file' and '--tls-key-file' files. Used also when auto-generating certificates flag is set. (default "/certs")
          --disable-settings-authorizer  When enabled, Dashboard settings page will not require user to be logged in and authorized to access settings page.
          --enable-insecure-login  When enabled, Dashboard login view will also be shown when Dashboard is not served over HTTPS. Default: false.
          --enable-skip-login  When enabled, the skip button on the login page will be shown. Default: false.
          --heapster-host string  The address of the Heapster Apiserver to connect to in the format of protocol://address:port, e.g., http://localhost:8082. If not specified, the assumption is that the binary runs inside a Kubernetes cluster and service proxy will be used.
          --insecure-bind-address ip  The IP address on which to serve the --port (set to 0.0.0.0 for all interfaces). (default 127.0.0.1)
          --insecure-port int  The port to listen to for incoming HTTP requests. (default 9090)
          --kubeconfig string  Path to kubeconfig file with authorization and master location information.
          --log_backtrace_at traceLocation  when logging hits line file:N, emit a stack trace (default :0)
          --log_dir string  If non-empty, write log files in this directory
          --logtostderr  log to standard error instead of files
          --metric-client-check-period int  Time in seconds that defines how often configured metric client health check should be run. Default: 30 seconds. (default 30)
          --port int  The secure port to listen to for incoming HTTPS requests. (default 8443)
          --stderrthreshold severity  logs at or above this threshold go to stderr (default 2)
          --system-banner string  When non-empty displays message to Dashboard users. Accepts simple HTML tags. Default: ''.
          --system-banner-severity string  Severity of system banner. Should be one of 'INFO|WARNING|ERROR'. Default: 'INFO'. (default "INFO")
          --tls-cert-file string  File containing the default x509 Certificate for HTTPS.
          --tls-key-file string  File containing the default x509 private key matching --tls-cert-file.
          --token-ttl int  Expiration time (in seconds) of JWE tokens generated by dashboard. Default: 15 min. 0 - never expires (default 900)
      -v, --v Level  log level for V logs
          --vmodule moduleSpec  comma-separated list of pattern=N settings for file-filtered logging
    pflag: help requested
    command terminated with exit code 2

5) Access the dashboard

Since version 1.7, the dashboard can only be accessed over HTTPS. When kubectl proxy is used, it must listen on localhost or 127.0.0.1. NodePort access has no such restriction, but it is recommended only for development environments. For logins that do not meet these conditions, the browser does not redirect after a successful login and stays on the login page.

There are three ways to access the dashboard:

->The kubernetes-dashboard service exposes a NodePort, so the dashboard can be reached at https://NodeIP:NodePort;

->Through kubectl proxy;

->Through kube-apiserver.

Method 1: the kubernetes-dashboard service exposes a NodePort, so the dashboard can be reached at https://NodeIP:NodePort

    [root@k8s-master01 dashboard]# kubectl get services kubernetes-dashboard -n kube-system
    NAME                   TYPE       CLUSTER-IP       EXTERNAL-IP   PORT(S)         AGE
    kubernetes-dashboard   NodePort   10.254.164.208   <none>        443:30284/TCP   14m

The dashboard can then be opened at https://172.16.60.244:30284, https://172.16.60.245:30284, or https://172.16.60.246:30284.

Method 2: access the dashboard through kubectl proxy

Start the proxy (the command below keeps running in the foreground; consider running it inside a tmux session):

    [root@k8s-master01 dashboard]# kubectl proxy --address='localhost' --port=8086 --accept-hosts='^*$'
    Starting to serve on 127.0.0.1:8086

Note that --address must be localhost or 127.0.0.1, and that the --accept-hosts option must be specified; otherwise the browser shows "Unauthorized" when opening the dashboard page. The dashboard can then be opened in a browser on this server at the URL: http://127.0.0.1:8086/api/v1/namespaces/kube-system/services/https:kubernetes-dashboard:/proxy

Method 3: access the dashboard through kube-apiserver

List the cluster service addresses:

    [root@k8s-master01 dashboard]# kubectl cluster-info
    Kubernetes master is running at https://172.16.60.250:8443
    CoreDNS is running at https://172.16.60.250:8443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy
    kubernetes-dashboard is running at https://172.16.60.250:8443/api/v1/namespaces/kube-system/services/https:kubernetes-dashboard:/proxy
    To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

Note that the dashboard must be accessed through the secure (HTTPS) port of kube-apiserver, and the browser must present a custom certificate; otherwise kube-apiserver rejects the request. Creating and importing the custom certificate was covered earlier in the "deploying the node worker nodes" part, so it is skipped here.

Open the URL https://172.16.60.250:8443/api/v1/namespaces/kube-system/services/https:kubernetes-dashboard:/proxy in a browser to reach the dashboard.

6) Create a login token and a kubeconfig file for the Dashboard

By default the dashboard supports only token authentication (client certificate authentication is not supported), so when a kubeconfig file is used, the token must be written into that file.

Option 1: create a login token

    [root@k8s-master01 ~]# kubectl create sa dashboard-admin -n kube-system
    serviceaccount/dashboard-admin created

    [root@k8s-master01 ~]# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin
    clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin created

    [root@k8s-master01 ~]# ADMIN_SECRET=$(kubectl get secrets -n kube-system | grep dashboard-admin | awk '{print $1}')
    [root@k8s-master01 ~]# DASHBOARD_LOGIN_TOKEN=$(kubectl describe secret -n kube-system ${ADMIN_SECRET} | grep -E '^token' | awk '{print $2}')
    [root@k8s-master01 ~]# echo ${DASHBOARD_LOGIN_TOKEN}
    eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tcmNicnMiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiZGQ1Njg0OGUtOTc2Yi0xMWU5LTkwZDQtMDA1MDU2YWM3YzgxIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.Kwh_zhI-dA8kIfs7DRmNecS_pCXQ3B2ujS_eooR-Gvoaz29cJTzD_Z67bRDS1qlJ8oyIQjW2_m837EkUCpJ8LRiOnTMjwBPMeBPHHomDGdSmdj37UEc7YQa5AmkvVWIYiUKgTHJjgLaKlk6eH7Ihvcez3IBHWTFXlULu24mlMt9XP4J7M5fIg7I5-ctfLIbV2NsvWLwiv6JAECocbGX1w0fJTmn9LlheiDQP1ByxU_WavsFYWOYPEqdUQbqcZ7iovT1ZUVyFuGS5rxzSHm86tcK_ptEinYO1dGLjMrLRZ3tB1OAOW8_u-VnHqsNwKjbZJNUljfzCGy1YoI2xUB7V4w

The token printed above can be used to log in to the Dashboard.

Option 2: create a kubeconfig file that uses the token (recommended)

    [root@k8s-master01 ~]# source /opt/k8s/bin/environment.sh

Set the cluster parameters:

    [root@k8s-master01 ~]# kubectl config set-cluster kubernetes \
      --certificate-authority=/etc/kubernetes/cert/ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=dashboard.kubeconfig

Set the client credentials, using the token created above:

    [root@k8s-master01 ~]# kubectl config set-credentials dashboard_user \
      --token=${DASHBOARD_LOGIN_TOKEN} \
      --kubeconfig=dashboard.kubeconfig

Set the context parameters:

    [root@k8s-master01 ~]# kubectl config set-context default \
      --cluster=kubernetes \
      --user=dashboard_user \
      --kubeconfig=dashboard.kubeconfig

Set the default context:

    [root@k8s-master01 ~]# kubectl config use-context default --kubeconfig=dashboard.kubeconfig

Copy the generated dashboard.kubeconfig file to your local machine and use it to log in to the Dashboard.

    [root@k8s-master01 ~]# ll dashboard.kubeconfig
    -rw------- 1 root root 3025 Jun 26 01:14 dashboard.kubeconfig
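The token can also be read without parsing the human-readable output of `kubectl describe`. The following is a minimal sketch, assuming the token is stored base64-encoded under `.data.token` of the service-account secret (as it is in the cluster version used here); the function name `dashboard_token` is made up for illustration.

```shell
#!/usr/bin/env bash
# dashboard_token SECRET_NAME
# Prints the decoded bearer token stored in a service-account secret
# in the kube-system namespace.
dashboard_token() {
  local secret="$1"
  # .data.token is base64-encoded in the Secret object, so decode it.
  kubectl -n kube-system get secret "$secret" -o jsonpath='{.data.token}' | base64 --decode
}
```

With the `ADMIN_SECRET` variable from the steps above, `DASHBOARD_LOGIN_TOKEN="$(dashboard_token "${ADMIN_SECRET}")"` would yield the same value as the `grep`/`awk` pipeline.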

Because neither Heapster nor metrics-server is installed at this point, the dashboard cannot yet display CPU and memory statistics or charts for Pods and Nodes.

3 - Deploying the metrics-server add-on

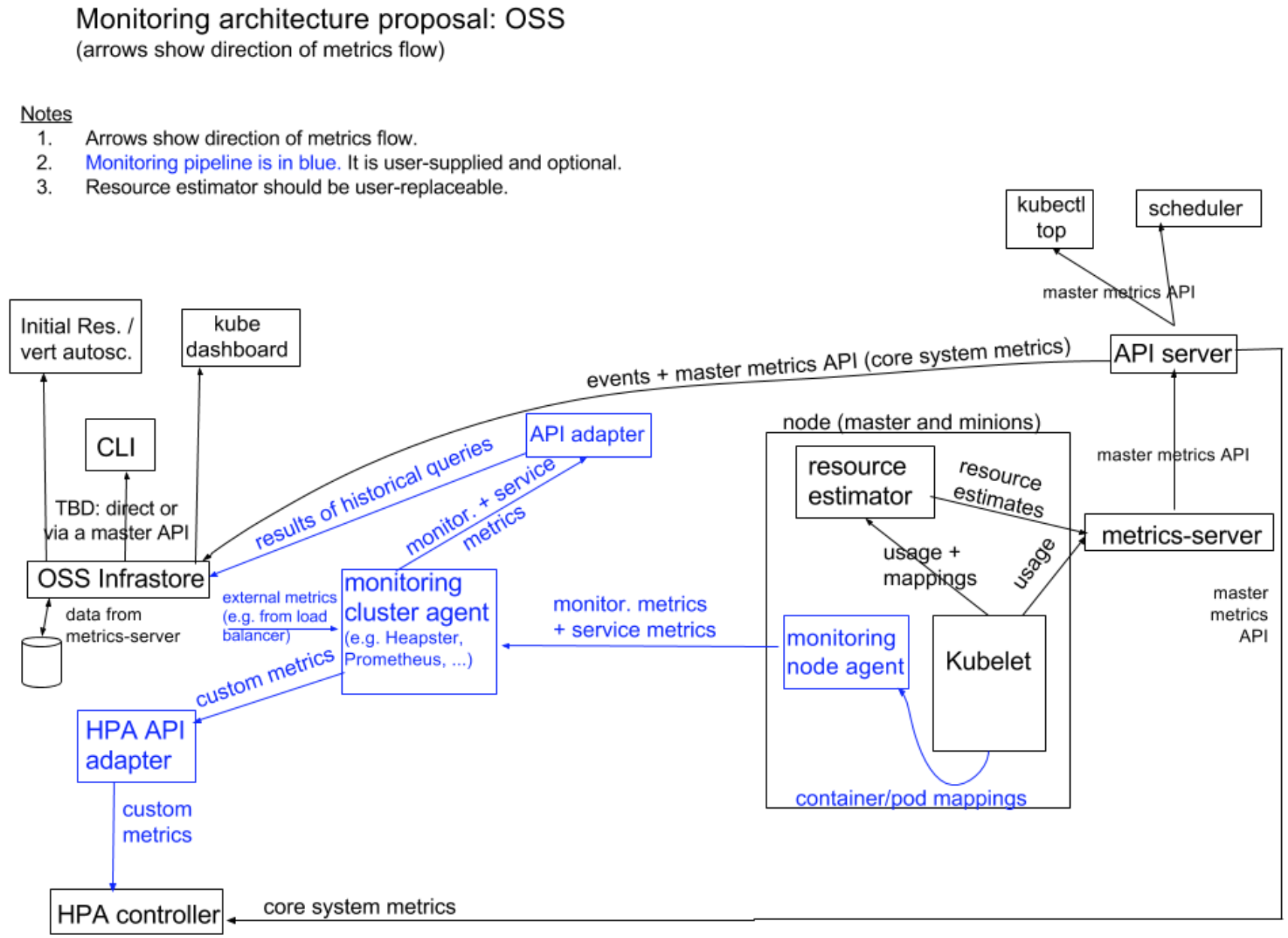

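metrics-server discovers all nodes through kube-apiserver and then calls the kubelet APIs (over HTTPS) to obtain CPU, memory, and other resource usage for each Node and Pod. Starting with Kubernetes 1.12, the Kubernetes installation scripts removed Heapster; starting with 1.13, support for Heapster was removed entirely and Heapster is no longer maintained. The replacements are:

->CPU/memory HPA metrics for autoscaling: metrics-server;

->General-purpose monitoring: a third-party monitoring system that can scrape Prometheus-format metrics, such as Prometheus Operator;

->Event transfer: third-party tools that ship and archive Kubernetes events;

Starting with Kubernetes 1.8, resource usage metrics (such as container CPU and memory usage) are obtained in Kubernetes through the Metrics API, and metrics-server replaces Heapster. Metrics Server implements the Resource Metrics API and is a cluster-wide aggregator of resource usage data. It collects metrics from the Summary API exposed by the kubelet on each node.

Before looking at Metrics Server, you need to understand the Metrics API. Compared with the earlier collection approach (Heapster), the Metrics API is a new design: the project wants core metrics monitoring to be stable and versioned, and to be directly accessible to users (for example through the kubectl top command) or to controllers in the cluster (such as the HPA), just like any other Kubernetes API. Heapster was deprecated in order to treat core resource monitoring as a first-class citizen, accessible directly through the api-server or a client in the same way as pods and services, rather than installing Heapster to aggregate the data and having Heapster manage it separately.

Suppose we collect 10 metrics for each pod and node. Since version 1.6, Kubernetes supports 5000 nodes with 30 pods per node. With a collection interval of once per minute, that gives 10 x 5000 x 30 / 60 = 25000, an average of 25,000 samples per second. Because the Kubernetes api-server persists all of its data to etcd, Kubernetes itself clearly cannot handle collection at this rate. Monitoring data also changes quickly and is transient, so a separate component is needed to handle it, keeping only part of the data in memory. This is how the metrics-server concept came about. Heapster did already expose an API, but users and other Kubernetes components had to go through the master proxy to reach it, and unlike the api-server, Heapster's interface had no complete authentication, authorization, or client integration.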
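The estimate can be reproduced with shell integer arithmetic. This is only a sketch of the back-of-the-envelope calculation from the text; the function name `samples_per_second` is invented for illustration.

```shell
#!/usr/bin/env bash
# samples_per_second METRICS NODES PODS_PER_NODE INTERVAL_SECONDS
# Average number of samples per second when every pod reports METRICS
# metrics once per INTERVAL_SECONDS.
samples_per_second() {
  local metrics="$1" nodes="$2" pods_per_node="$3" interval="$4"
  echo $(( metrics * nodes * pods_per_node / interval ))
}

samples_per_second 10 5000 30 60   # prints 25000
```

The same function makes it easy to see how the load scales, for example when the collection interval is shortened to 30 seconds.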

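With Metrics Server in place, the data is collected and an API is exposed. But because the API must be unified, how are requests sent to the api-server at /apis/metrics forwarded to Metrics Server?

The answer is kube-aggregator, which was completed in Kubernetes 1.7. Metrics Server had not been released earlier precisely because it was waiting on kube-aggregator.

kube-aggregator (the aggregated API) mainly provides:

->Provide an API for registering API servers;

->Summarize discovery information from all the servers;

->Proxy client requests to individual servers;

Using the Metrics API:

->The Metrics API can only query current measurements; it does not keep historical data

->The Metrics API URI is /apis/metrics.k8s.io/, maintained in k8s.io/metrics

->metrics-server must be deployed before the API can be used; metrics-server obtains its data by calling the kubelet Summary API

Metrics Server periodically collects metrics from the kubelet Summary API (a path like /api/v1/nodes/nodename/stats/summary). The aggregated data is stored in memory and exposed in metric-api form. Metrics Server reuses the api-server libraries for features such as authentication and versioning. To keep the data in memory, it drops the default etcd storage and introduces an in-memory store (that is, an implementation of the Storage interface). Because the data lives in memory, monitoring data is not persisted; it can be extended with third-party storage, which is the same as with Heapster.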
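To look at the raw data Metrics Server consumes, the Summary API can be queried through kube-apiserver. The following is a minimal sketch, assuming the node proxy path `/api/v1/nodes/<node>/proxy/stats/summary` is reachable with the current kubectl credentials; the function names are made up for illustration.

```shell
#!/usr/bin/env bash
# summary_path NODE
# Builds the API-server proxy path for a node's kubelet Summary API.
summary_path() {
  printf '/api/v1/nodes/%s/proxy/stats/summary\n' "$1"
}

# node_summary NODE
# Fetches the raw Summary API JSON for NODE through kube-apiserver.
node_summary() {
  kubectl get --raw "$(summary_path "$1")"
}
```

For example, `node_summary k8s-node01` would return the kubelet's node and pod statistics as JSON for the node named k8s-node01.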

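Kubernetes Dashboard (v1.10.1, the version deployed here) does not yet support metrics-server. If metrics-server replaces Heapster, the dashboard cannot chart Pod memory and CPU usage, and a monitoring stack such as Prometheus and Grafana is needed to fill the gap. The manifest YAML files of the add-ons bundled with Kubernetes reference images on the gcr.io Docker registry, which is blocked in mainland China, so the image address has to be replaced by hand with another registry (not done in this document). The blocked images can be downloaded from the free gcr.io proxy provided by Microsoft China. All deployment commands below are run on the k8s-master01 node.

Monitoring architecture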

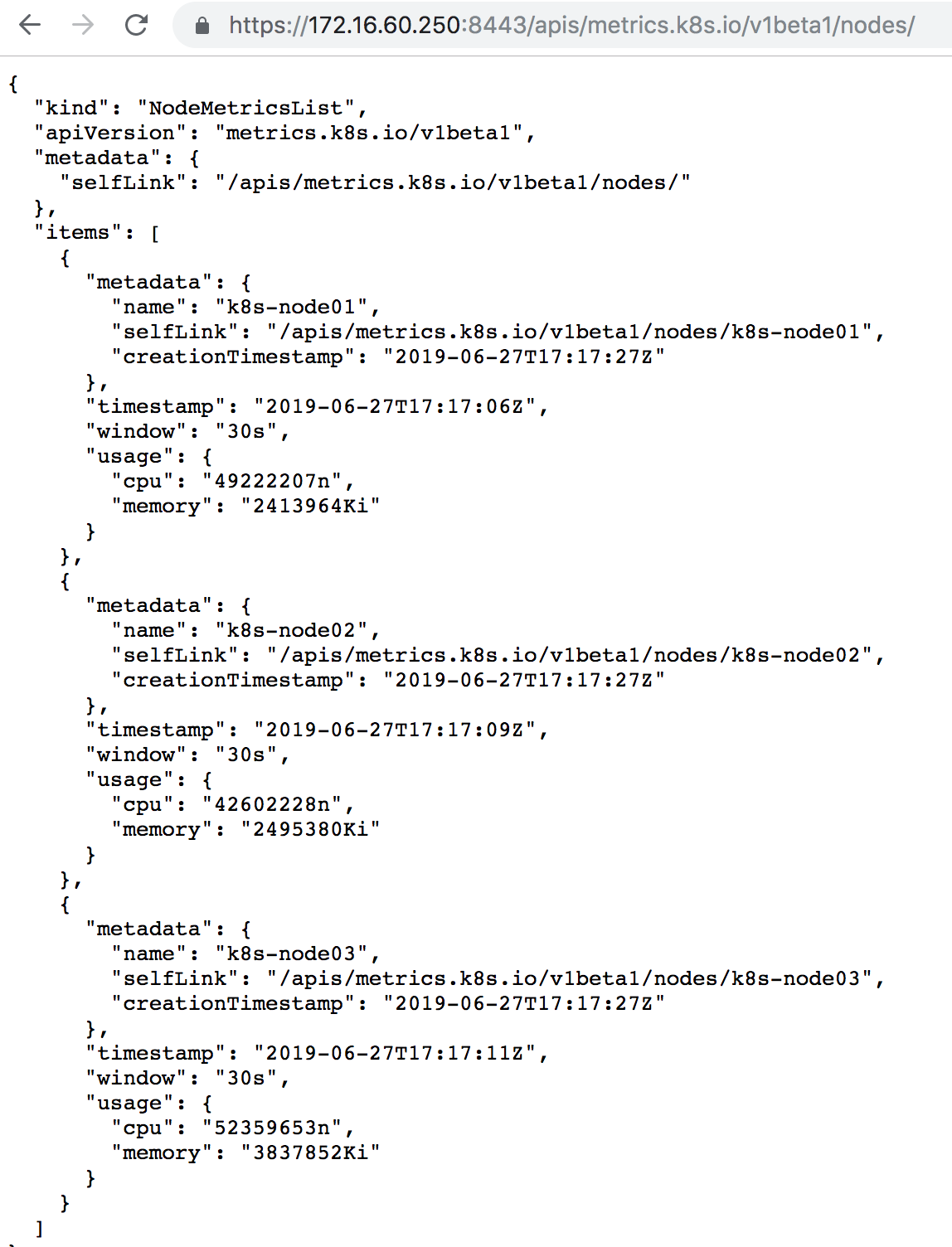

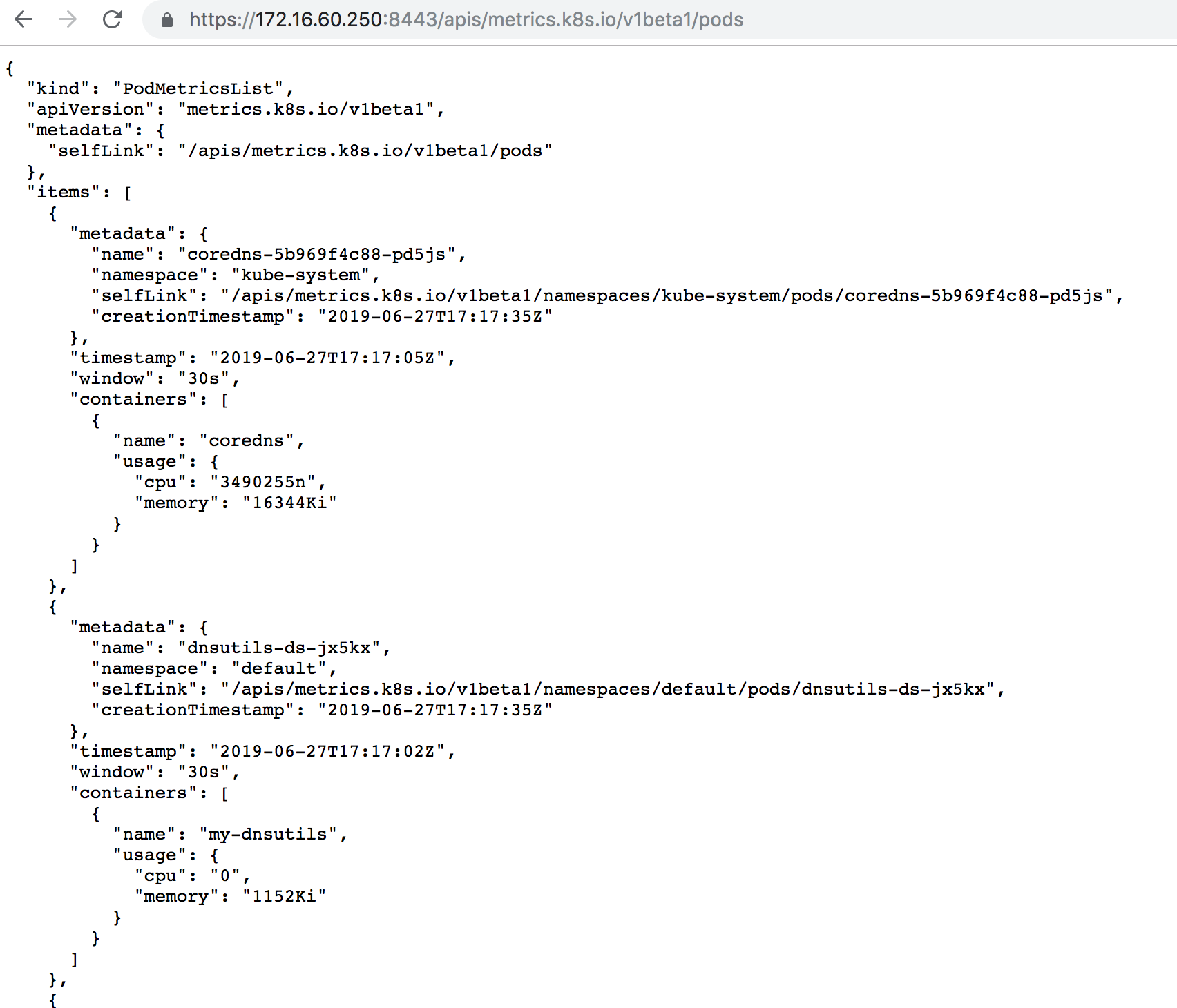

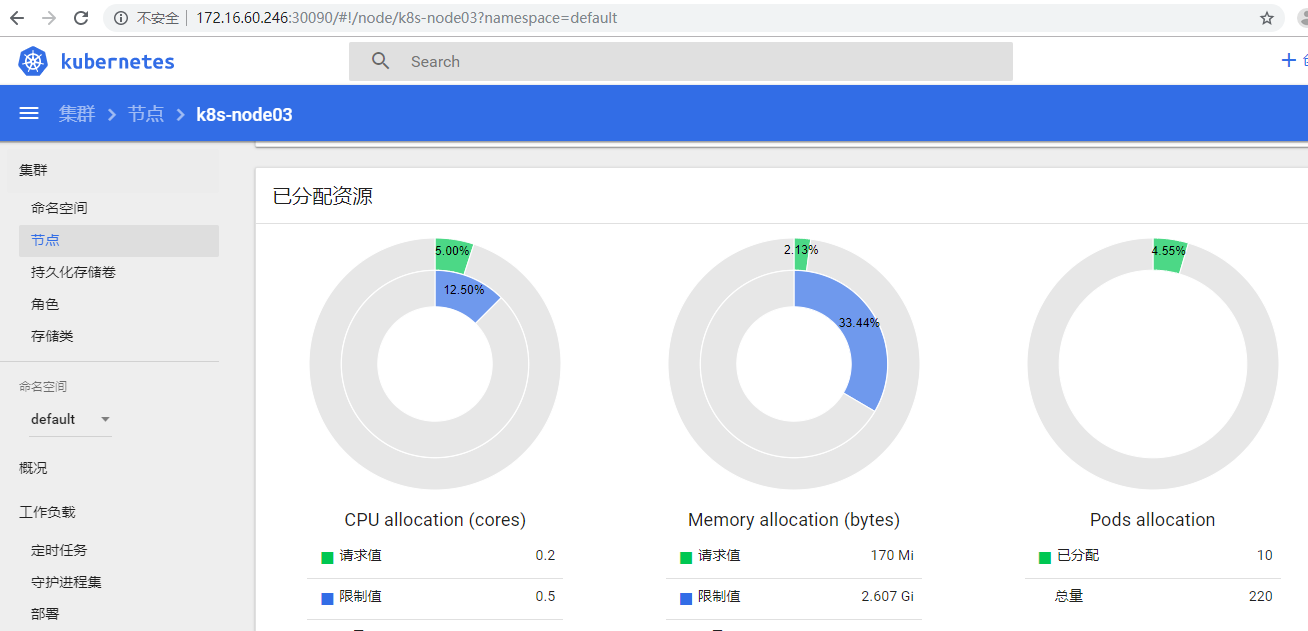

1) Install metrics-server

Clone the source from GitHub:

    [root@k8s-master01 ~]# cd /opt/k8s/work/
    [root@k8s-master01 work]# git clone https://github.com/kubernetes-incubator/metrics-server.git
    [root@k8s-master01 work]# cd metrics-server/deploy/1.8+/
    [root@k8s-master01 1.8+]# ls
    aggregated-metrics-reader.yaml  auth-reader.yaml         metrics-server-deployment.yaml  resource-reader.yaml
    auth-delegator.yaml             metrics-apiservice.yaml  metrics-server-service.yaml

Edit metrics-server-deployment.yaml and add the following command-line arguments for metrics-server (below the "imagePullPolicy" line):

    [root@k8s-master01 1.8+]# cp metrics-server-deployment.yaml metrics-server-deployment.yaml.bak
    [root@k8s-master01 1.8+]# vim metrics-server-deployment.yaml
    .........
            args:
            - --metric-resolution=30s
            - --kubelet-preferred-address-types=InternalIP,Hostname,InternalDNS,ExternalDNS,ExternalIP

Notes:

--metric-resolution=30s: the interval at which data is collected from the kubelet;

--kubelet-preferred-address-types: prefer InternalIP when contacting the kubelet. This avoids failures when the kubelet API is called by node name and the node name has no DNS record (which is what happens by default when the flag is not set).

In addition, download the k8s.gcr.io/metrics-server-amd64:v0.3.3 image in advance through a proxy, upload it to the node machines, and run "docker load ......" to import it into each node's local images. Alternatively, pull the image from the free gcr.io proxy provided by Microsoft China and update the metrics-server image address in the YAML file.

    [root@k8s-master01 1.8+]# fgrep "image" metrics-server-deployment.yaml
          # mount in tmp so we can safely use from-scratch images and/or read-only containers
            image: k8s.gcr.io/metrics-server-amd64:v0.3.3
            imagePullPolicy: Always

Because the image has already been imported on every node, change the image pull policy in metrics-server-deployment.yaml to "IfNotPresent", meaning the local image is used when present and nothing is pulled:

    [root@k8s-master01 1.8+]# fgrep "image" metrics-server-deployment.yaml
          # mount in tmp so we can safely use from-scratch images and/or read-only containers
            image: k8s.gcr.io/metrics-server-amd64:v0.3.3
            imagePullPolicy: IfNotPresent

Deploy metrics-server:

    [root@k8s-master01 1.8+]# kubectl create -f .

2) Check that it is running

    [root@k8s-master01 1.8+]# kubectl -n kube-system get pods -l k8s-app=metrics-server
    NAME                              READY   STATUS    RESTARTS   AGE
    metrics-server-54997795d9-4cv6h   1/1     Running   0          50s

    [root@k8s-master01 1.8+]# kubectl get svc -n kube-system metrics-server
    NAME             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)   AGE
    metrics-server   ClusterIP   10.254.238.208   <none>        443/TCP   65s

3) metrics-server command-line flags (run the command below on any node)

    [root@k8s-node01 ~]# docker run -it --rm k8s.gcr.io/metrics-server-amd64:v0.3.3 --help

4) View the metrics exposed by metrics-server

->Through kube-apiserver or kubectl proxy:

    https://172.16.60.250:8443/apis/metrics.k8s.io/v1beta1/nodes
    https://172.16.60.250:8443/apis/metrics.k8s.io/v1beta1/nodes/<node-name>
    https://172.16.60.250:8443/apis/metrics.k8s.io/v1beta1/pods
    https://172.16.60.250:8443/apis/metrics.k8s.io/v1beta1/namespaces/<namespace-name>/pods/<pod-name>

->Directly with kubectl:

    # kubectl get --raw /apis/metrics.k8s.io/v1beta1/nodes
    # kubectl get --raw /apis/metrics.k8s.io/v1beta1/pods
    # kubectl get --raw /apis/metrics.k8s.io/v1beta1/nodes/<node-name>
    # kubectl get --raw /apis/metrics.k8s.io/v1beta1/namespaces/<namespace-name>/pods/<pod-name>

    [root@k8s-master01 1.8+]# kubectl get --raw "/apis/metrics.k8s.io/v1beta1" | jq .
    {
      "kind": "APIResourceList",
      "apiVersion": "v1",
      "groupVersion": "metrics.k8s.io/v1beta1",
      "resources": [
        {
          "name": "nodes",
          "singularName": "",
          "namespaced": false,
          "kind": "NodeMetrics",
          "verbs": ["get", "list"]
        },
        {
          "name": "pods",
          "singularName": "",
          "namespaced": true,
          "kind": "PodMetrics",
          "verbs": ["get", "list"]
        }
      ]
    }

    [root@k8s-master01 1.8+]# kubectl get --raw "/apis/metrics.k8s.io/v1beta1/nodes" | jq .
    {
      "kind": "NodeMetricsList",
      "apiVersion": "metrics.k8s.io/v1beta1",
      "metadata": {
        "selfLink": "/apis/metrics.k8s.io/v1beta1/nodes"
      },
      "items": [
        {
          "metadata": {
            "name": "k8s-node01",
            "selfLink": "/apis/metrics.k8s.io/v1beta1/nodes/k8s-node01",
            "creationTimestamp": "2019-06-27T17:11:43Z"
          },
          "timestamp": "2019-06-27T17:11:36Z",
          "window": "30s",
          "usage": {
            "cpu": "47615396n",
            "memory": "2413536Ki"
          }
        },
        {
          "metadata": {
            "name": "k8s-node02",
            "selfLink": "/apis/metrics.k8s.io/v1beta1/nodes/k8s-node02",
            "creationTimestamp": "2019-06-27T17:11:43Z"
          },
          "timestamp": "2019-06-27T17:11:38Z",
          "window": "30s",
          "usage": {
            "cpu": "42000411n",
            "memory": "2496152Ki"
          }
        },
        {
          "metadata": {
            "name": "k8s-node03",
            "selfLink": "/apis/metrics.k8s.io/v1beta1/nodes/k8s-node03",
            "creationTimestamp": "2019-06-27T17:11:43Z"
          },
          "timestamp": "2019-06-27T17:11:40Z",
          "window": "30s",
          "usage": {
            "cpu": "54095172n",
            "memory": "3837404Ki"
          }
        }
      ]
    }

Note that the usage returned by /apis/metrics.k8s.io/v1beta1/nodes and /apis/metrics.k8s.io/v1beta1/pods contains both CPU and memory.

5) Use kubectl top to view resource usage of the cluster nodes

    [root@k8s-master01 1.8+]# kubectl top node
    NAME         CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
    k8s-node01   45m          1%     2357Mi          61%
    k8s-node02   44m          1%     2437Mi          63%
    k8s-node03   54m          1%     3747Mi          47%

Troubleshooting:

    [root@k8s-master01 1.8+]# kubectl top node
    Error from server (Forbidden): nodes.metrics.k8s.io is forbidden: User "aggregator" cannot list resource "nodes" in API group "metrics.k8s.io" at the cluster scope

This error occurs because the aggregator user has not been granted RBAC permissions. The quick-and-dirty fix is to bind this user directly to cluster-admin, although that violates the principle of least privilege.

    [root@k8s-master01 1.8+]# kubectl create clusterrolebinding custom-metric-with-cluster-admin --clusterrole=cluster-admin --user=aggregator
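A narrower grant than cluster-admin is possible. The following is a sketch, not the author's method: it assumes that read access to the `nodes` and `pods` resources in the `metrics.k8s.io` API group is all the "aggregator" user from the error message needs. The object name `metrics-reader-for-aggregator` and the function name are made up for illustration.

```shell
#!/usr/bin/env bash
# metrics_reader_manifest
# Prints a ClusterRole limited to reading metrics.k8s.io resources and a
# ClusterRoleBinding that grants it to the "aggregator" user.
# The name "metrics-reader-for-aggregator" is hypothetical.
metrics_reader_manifest() {
  cat <<'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: metrics-reader-for-aggregator
rules:
- apiGroups: ["metrics.k8s.io"]
  resources: ["nodes", "pods"]
  verbs: ["get", "list"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: metrics-reader-for-aggregator
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: metrics-reader-for-aggregator
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: aggregator
EOF
}
```

It could be applied with `metrics_reader_manifest | kubectl apply -f -`. If `kubectl top` still reports a Forbidden error afterwards, the message names the missing resource and verb, which can then be added to the rule.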

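4 - Deploying the kube-state-metrics add-on

With metric-server deployed, most container runtime metrics can be collected, but it cannot answer questions such as:

->How many replicas were scheduled, and how many are currently available?

->How many Pods are in the running/stopped/terminated state?

->How many times has a Pod restarted?

->How many jobs are currently running?

This is what kube-state-metrics provides. It is an add-on service for Kubernetes built on client-go. It polls the Kubernetes API and converts Kubernetes' structured information into metrics. kube-state-metrics can collect data on the vast majority of built-in Kubernetes resources, such as pods, deployments, and services. It also exposes metrics about itself, mainly the number of resources collected and the number of errors encountered during collection.

kube-state-metrics metric categories include: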

CronJob Metrics

DaemonSet Metrics

Deployment Metrics

Job Metrics

LimitRange Metrics

Node Metrics

PersistentVolume Metrics

PersistentVolumeClaim Metrics

Pod Metrics

Pod Disruption Budget Metrics

ReplicaSet Metrics

ReplicationController Metrics

ResourceQuota Metrics

Service Metrics

StatefulSet Metrics

Namespace Metrics

Horizontal Pod Autoscaler Metrics

Endpoint Metrics

Secret Metrics

ConfigMap Metrics

Taking pods as an example, the metrics include:

kube_pod_info

kube_pod_owner

kube_pod_status_running

kube_pod_status_ready

kube_pod_status_scheduled

kube_pod_container_status_waiting

kube_pod_container_status_terminated_reason

..............

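Comparing kube-state-metrics with metric-server (or Heapster)

1) metric-server obtains monitoring metrics such as CPU and memory usage from the api-server and sends them to a storage backend such as InfluxDB or a cloud provider. Its core role today is to supply decision metrics to components such as the HPA.

2) kube-state-metrics focuses on the latest state of Kubernetes resources such as Deployments or DaemonSets. It was not folded into metric-server because the two have fundamentally different concerns. metric-server merely fetches and formats existing data and writes it to a specific store; it is essentially a monitoring system. kube-state-metrics takes an in-memory snapshot of the cluster's running state and derives new metrics from it, but it has no ability to export those metrics.

3) Put another way, kube-state-metrics could itself be a data source for metric-server, although that is not how things work today.

4) Monitoring systems such as Prometheus do not use the data in metric-server; they collect and integrate metrics themselves (Prometheus covers what metric-server does). Prometheus can, however, monitor the health of the metric-server component itself and alert when needed, and that monitoring can be done through kube-state-metrics, for example by watching the running state of the metric-server pod.

kube-state-metrics essentially polls the api-server continuously. Its performance optimizations:

Earlier versions of kube-state-metrics exposed two problems:

1) The /metrics endpoint responded slowly (10-20s)

2) Memory consumption was too high, so the process exceeded its limit and was killed

Fix for problem 1: a local cache built on the client-go cache tool, with the structure: var cache = map[uuid][]byte{}

Fix for problem 2: time-series strings contain many repeated substrings (such as namespace prefixes used for filtering), which can be shared through pointers or by structuring the repeated parts.

kube-state-metrics optimizations and open issues

1) kube-state-metrics watches the add, delete, and update events of resources, so is data about resources that were already running before kube-state-metrics was deployed lost? No: kube-state-metrics uses client-go to initialize all existing resource objects, so nothing is missed;

2) kube-state-metrics currently does not output metadata (such as help and description);

3) The cache is implemented with a Go map. Concurrent reads are currently handled with a simple mutex, which should be sufficient; Go's concurrency-safe sync.Map may be considered later;

4) kube-state-metrics guarantees event ordering by comparing resource versions;

5) kube-state-metrics does not guarantee that all resources are included;

All deployment commands below are run on the k8s-master01 node.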

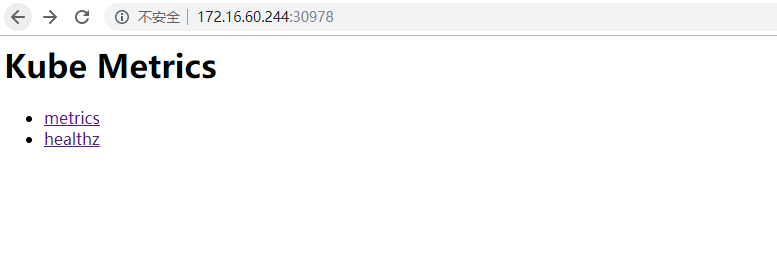

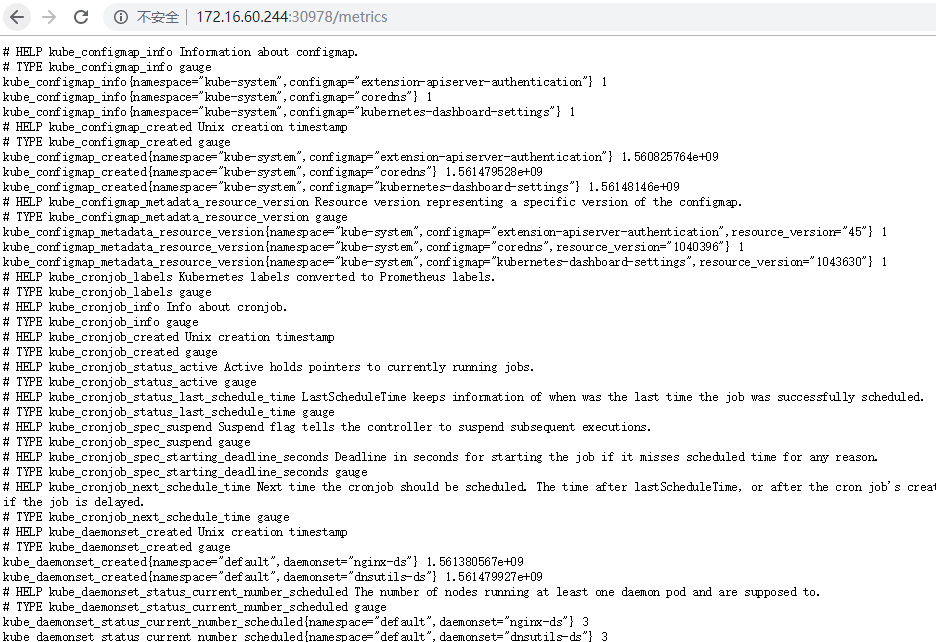

1) Modify the configuration files

Put the downloaded kube-state-metrics.tar.gz in /opt/k8s/work and extract it:

    [root@k8s-master01 ~]# cd /opt/k8s/work/
    [root@k8s-master01 work]# tar -zvxf kube-state-metrics.tar.gz
    [root@k8s-master01 work]# cd kube-state-metrics

The kube-state-metrics directory contains the required files:

    [root@k8s-master01 kube-state-metrics]# ll
    total 32
    -rw-rw-r-- 1 root root  362 May  6 17:31 kube-state-metrics-cluster-role-binding.yaml
    -rw-rw-r-- 1 root root 1076 May  6 17:31 kube-state-metrics-cluster-role.yaml
    -rw-rw-r-- 1 root root 1657 Jul  1 17:35 kube-state-metrics-deployment.yaml
    -rw-rw-r-- 1 root root  381 May  6 17:31 kube-state-metrics-role-binding.yaml
    -rw-rw-r-- 1 root root  508 May  6 17:31 kube-state-metrics-role.yaml
    -rw-rw-r-- 1 root root   98 May  6 17:31 kube-state-metrics-service-account.yaml
    -rw-rw-r-- 1 root root  404 May  6 17:31 kube-state-metrics-service.yaml

    [root@k8s-master01 kube-state-metrics]# fgrep -R "image" ./*
    ./kube-state-metrics-deployment.yaml:        image: quay.io/coreos/kube-state-metrics:v1.5.0
    ./kube-state-metrics-deployment.yaml:        imagePullPolicy: IfNotPresent
    ./kube-state-metrics-deployment.yaml:        image: k8s.gcr.io/addon-resizer:1.8.3
    ./kube-state-metrics-deployment.yaml:        imagePullPolicy: IfNotPresent

    [root@k8s-master01 kube-state-metrics]# cat kube-state-metrics-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: kube-state-metrics
      namespace: kube-system
      labels:
        k8s-app: kube-state-metrics
      annotations:
        prometheus.io/scrape: 'true'
    spec:
      ports:
      - name: http-metrics
        port: 8080
        targetPort: http-metrics
        protocol: TCP
      - name: telemetry
        port: 8081
        targetPort: telemetry
        protocol: TCP
      type: NodePort    # add this line
      selector:
        k8s-app: kube-state-metrics

Two things to note:

->The image "k8s.gcr.io/addon-resizer:1.8.3" cannot be pulled from mainland China. Replace it with "ist0ne/addon-resizer", or download it through a proxy.

->If the service needs to be reachable from outside the cluster, change its type to NodePort.

2) Apply all definition files

Download the quay.io/coreos/kube-state-metrics:v1.5.0 and k8s.gcr.io/addon-resizer:1.8.3 images in advance through a proxy, upload them to the node machines, and run "docker load ......" to import them into each node's local images. Alternatively, pull the images from the free gcr.io proxy provided by Microsoft China and update the kube-state-metrics image addresses in the YAML file. Because the images have already been imported on every node, the image pull policy in kube-state-metrics-deployment.yaml must be "IfNotPresent", meaning the local image is used when present and nothing is pulled.

    [root@k8s-master01 kube-state-metrics]# kubectl create -f .

Check the result:

    [root@k8s-master01 kube-state-metrics]# kubectl get pod -n kube-system | grep kube-state-metrics
    kube-state-metrics-5dd55c764d-nnsdv   2/2   Running   0   9m3s

    [root@k8s-master01 kube-state-metrics]# kubectl get svc -n kube-system | grep kube-state-metrics
    kube-state-metrics   NodePort   10.254.228.212   <none>   8080:30978/TCP,8081:30872/TCP   9m14s

    [root@k8s-master01 kube-state-metrics]# kubectl get pod,svc -n kube-system | grep kube-state-metrics
    pod/kube-state-metrics-5dd55c764d-nnsdv   2/2   Running   0   9m12s
    service/kube-state-metrics   NodePort   10.254.228.212   <none>   8080:30978/TCP,8081:30872/TCP   9m18s

3) Verify that kube-state-metrics collects data

The checks above show that the externally reachable NodePort is 30978. Verify access through any worker node:

    [root@k8s-master01 kube-state-metrics]# curl http://172.16.60.244:30978/metrics | head -10
      % Total    % Received % Xferd  Average Speed   Time    Time     Time  Current
                                     Dload  Upload   Total   Spent    Left  Speed
      0     0    0     0    0     0      0      0 --:--:-- --:--:-- --:--:--     0
    # HELP kube_configmap_info Information about configmap.
    # TYPE kube_configmap_info gauge
    kube_configmap_info{namespace="kube-system",configmap="extension-apiserver-authentication"} 1
    kube_configmap_info{namespace="kube-system",configmap="coredns"} 1
    kube_configmap_info{namespace="kube-system",configmap="kubernetes-dashboard-settings"} 1
    # HELP kube_configmap_created Unix creation timestamp
    # TYPE kube_configmap_created gauge
    kube_configmap_created{namespace="kube-system",configmap="extension-apiserver-authentication"} 1.560825764e+09
    kube_configmap_created{namespace="kube-system",configmap="coredns"} 1.561479528e+09
    kube_configmap_created{namespace="kube-system",configmap="kubernetes-dashboard-settings"} 1.56148146e+09
    100 73353    0 73353    0     0  9.8M      0 --:--:-- --:--:-- --:--:-- 11.6M
    curl: (23) Failed writing body (0 != 2048)

The final curl error appears because head closes the pipe after ten lines; it does not indicate a problem with the endpoint.
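When only one metric is of interest, the output can be filtered instead of truncated with `head`. The following is a minimal sketch that reads Prometheus text-format output on stdin; the function name `metric_samples` is made up for illustration.

```shell
#!/usr/bin/env bash
# metric_samples NAME
# Reads Prometheus text-format metrics on stdin and prints only the sample
# lines whose metric name is exactly NAME. HELP/TYPE comment lines are skipped.
metric_samples() {
  local name="$1"
  awk -v name="$name" '
    /^#/ { next }                      # skip "# HELP" and "# TYPE" lines
    {
      split($0, parts, /[{ ]/)         # metric name ends at "{" or a space
      if (parts[1] == name) print
    }
  '
}
```

For example, `curl -s http://172.16.60.244:30978/metrics | metric_samples kube_configmap_info` would print only the `kube_configmap_info` samples. Because the whole response is consumed, curl no longer reports a write error.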

5 - 部署 harbor 私有仓库

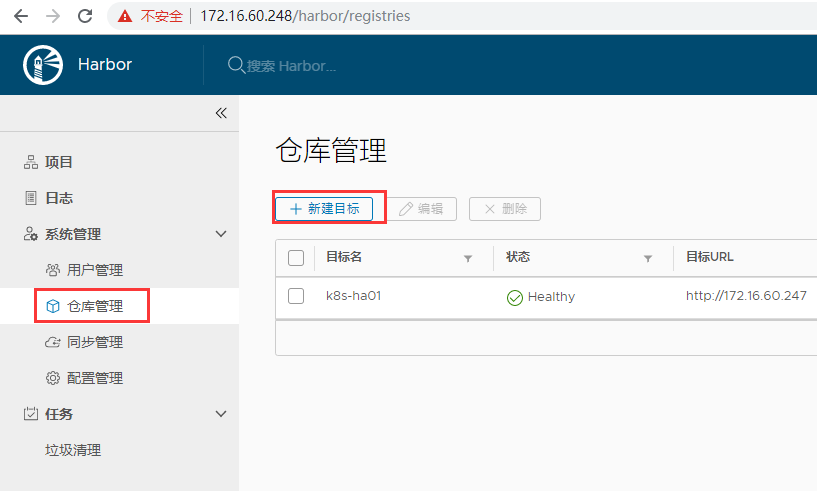

安装的话,可以参考Docker私有仓库Harbor介绍和部署方法详解,需要在两台节点机172.16.60.247、172.16.60.248上都安装harbor私有仓库环境。上层通过Nginx+Keepalived实现Harbor的负载均衡+高可用,两个Harbor相互同步(主主复制)。

harbor上远程同步的操作:

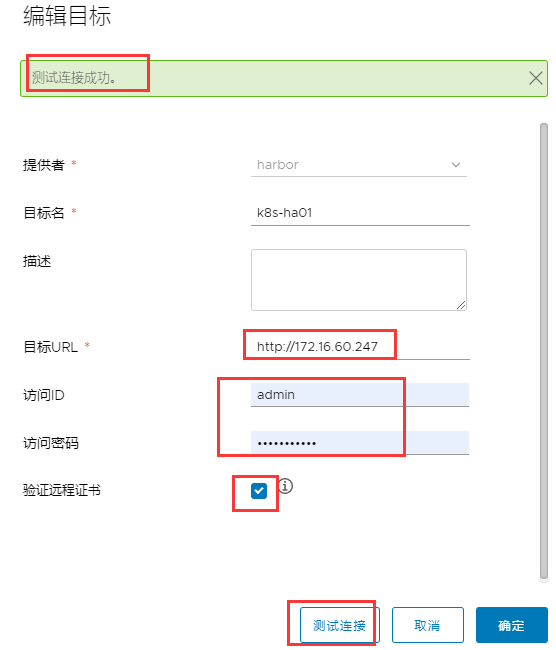

1)"仓库管理"创建目标,创建后可以测试是否正常连接目标。

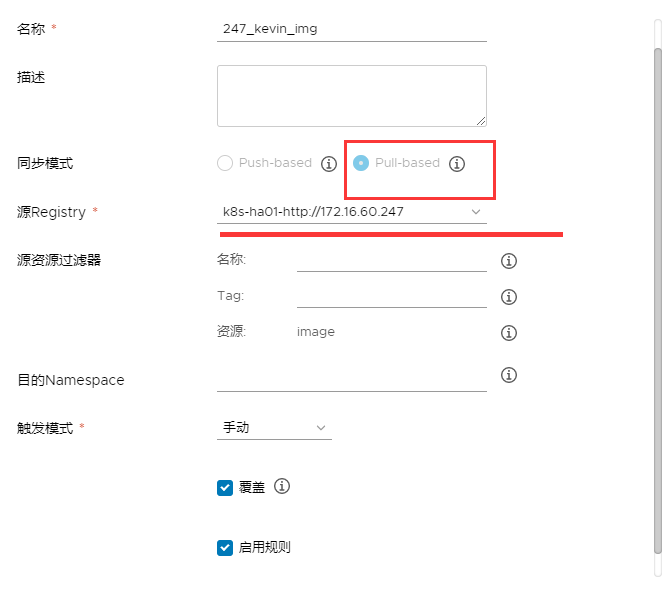

2)"同步管理"创建规则,在规则中调用上面创建的目标。

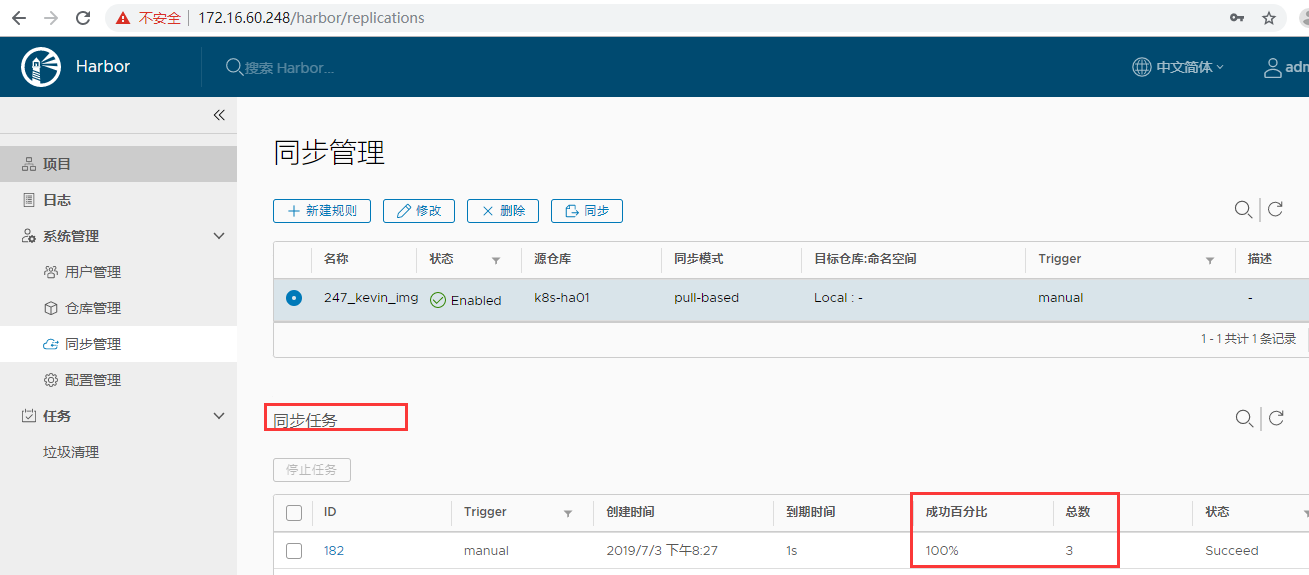

3)手动同步或定时同步。

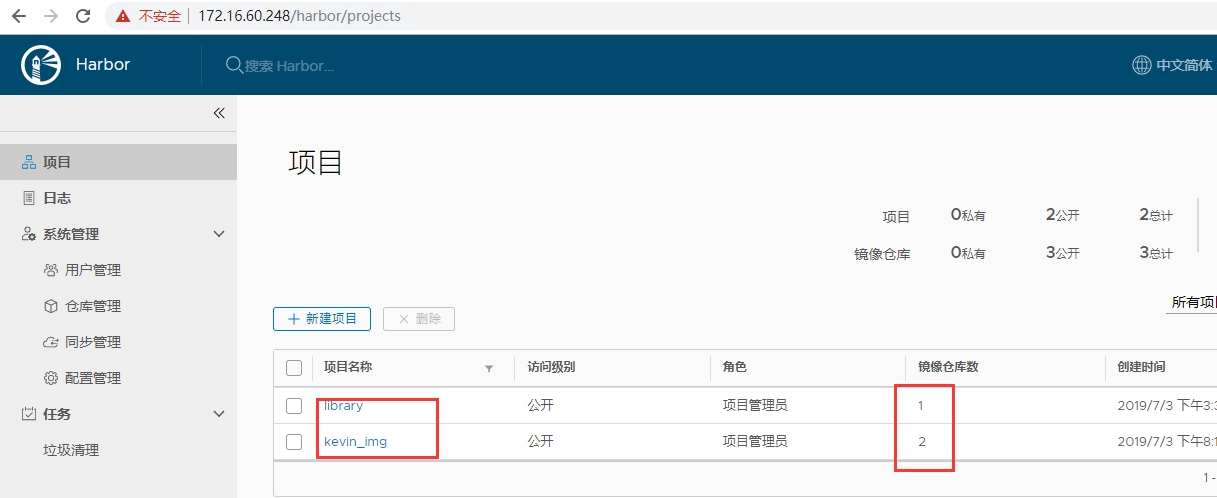

例如:已经在172.16.60.247这台harbor节点的私有仓库library和kevin_img的项目里各自存放了镜像,如下:

现在要把172.16.60.247的harbor私有仓库的这两个项目下的镜像同步到另一个节点172.16.60.248的harbor里。同步同步方式:147 -> 148或147 <- 148

上面是手动同步,也可以选择定时同步,分别填写的是"秒 分 时 日 月 周", 如下每两分钟同步一次! 则过了两分钟之后就会自动同步过来了~

6 - Testing Kubernetes cluster management

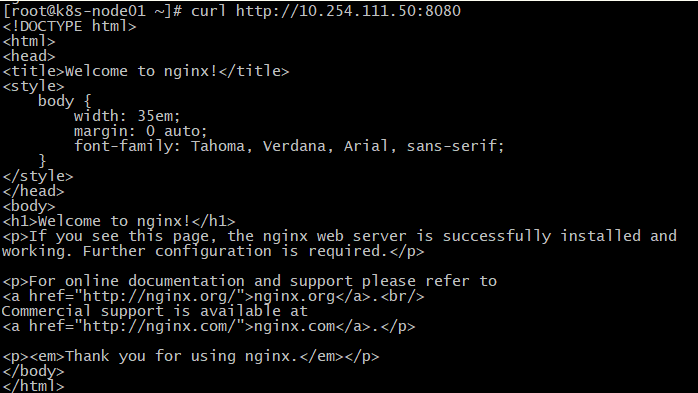

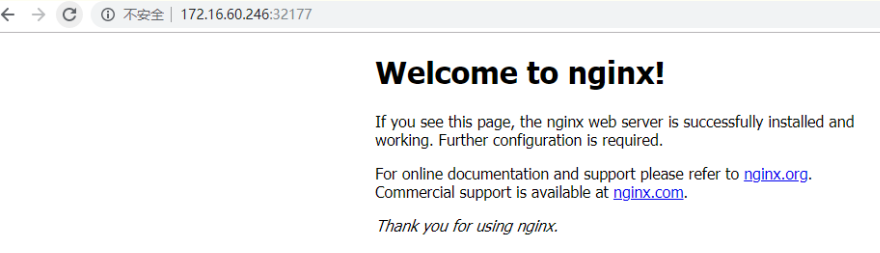

    [root@k8s-master01 ~]# kubectl get cs
    NAME                 STATUS    MESSAGE             ERROR
    scheduler            Healthy   ok
    controller-manager   Healthy   ok
    etcd-2               Healthy   {"health":"true"}
    etcd-0               Healthy   {"health":"true"}
    etcd-1               Healthy   {"health":"true"}

    [root@k8s-master01 ~]# kubectl get nodes
    NAME         STATUS   ROLES    AGE   VERSION
    k8s-node01   Ready    <none>   20d   v1.14.2
    k8s-node02   Ready    <none>   20d   v1.14.2
    k8s-node03   Ready    <none>   20d   v1.14.2

Deploy a test instance:

    [root@k8s-master01 ~]# kubectl run kevin-nginx --image=nginx --replicas=3
    kubectl run --generator=deployment/apps.v1 is DEPRECATED and will be removed in a future version. Use kubectl run --generator=run-pod/v1 or kubectl create instead.
    deployment.apps/kevin-nginx created

    [root@k8s-master01 ~]# kubectl run --generator=run-pod/v1 kevin-nginx --image=nginx --replicas=3
    pod/kevin-nginx created

Wait a moment, then look at the kevin-nginx pods (the nginx image is downloaded automatically on creation, so this takes a while):

    [root@k8s-master01 ~]# kubectl get pods --all-namespaces | grep "kevin-nginx"
    default   kevin-nginx                    1/1   Running   0   98s
    default   kevin-nginx-569dcd559b-6h5nn   1/1   Running   0   106s
    default   kevin-nginx-569dcd559b-7f2b4   1/1   Running   0   106s
    default   kevin-nginx-569dcd559b-7tds2   1/1   Running   0   106s

View the details:

    [root@k8s-master01 ~]# kubectl get pods --all-namespaces -o wide | grep "kevin-nginx"
    default   kevin-nginx                    1/1   Running   0   2m13s   172.30.72.12   k8s-node03   <none>   <none>
    default   kevin-nginx-569dcd559b-6h5nn   1/1   Running   0   2m21s   172.30.56.7    k8s-node02   <none>   <none>
    default   kevin-nginx-569dcd559b-7f2b4   1/1   Running   0   2m21s   172.30.72.11   k8s-node03   <none>   <none>
    default   kevin-nginx-569dcd559b-7tds2   1/1   Running   0   2m21s   172.30.88.8    k8s-node01   <none>   <none>

    [root@k8s-master01 ~]# kubectl get deployment | grep kevin-nginx
    kevin-nginx   3/3   3   3   2m57s

Create the svc:

    [root@k8s-master01 ~]# kubectl expose deployment kevin-nginx --port=8080 --target-port=80 --type=NodePort
    [root@k8s-master01 ~]# kubectl get svc | grep kevin-nginx
    kevin-nginx   NodePort   10.254.111.50   <none>   8080:32177/TCP   33s

Inside the cluster, pods reach kevin-nginx at:

    [root@k8s-master01 ~]# curl http://10.254.111.50:8080

From outside, kevin-nginx is reachable at http://node_ip:32177:

    http://172.16.60.244:32177
    http://172.16.60.245:32177
    http://172.16.60.246:32177

7 - 清理kubernetes集群

1)清理 Node 节点 (node节点同样操作)

停相关进程:[root@k8s-node01~]#systemctlstopkubeletkube-proxyflannelddockerkube-proxykube-nginx清理文件:[root@k8s-node01~]#source/opt/k8s/bin/environment.shumountkubelet和docker挂载的目录[root@k8s-node01~]#mount|grep"${K8S_DIR}"|awk'{print$3}'|xargssudoumount删除kubelet工作目录[root@k8s-node01~]#sudorm-rf${K8S_DIR}/kubelet删除docker工作目录[root@k8s-node01~]#sudorm-rf${DOCKER_DIR}删除flanneld写入的网络配置文件[root@k8s-node01~]#sudorm-rf/var/run/flannel/删除docker的一些运行文件[root@k8s-node01~]#sudorm-rf/var/run/docker/删除systemdunit文件[root@k8s-node01~]#sudorm-rf/etc/systemd/system/{kubelet,docker,flanneld,kube-nginx}.service删除程序文件[root@k8s-node01~]#sudorm-rf/opt/k8s/bin/*删除证书文件[root@k8s-node01~]#sudorm-rf/etc/flanneld/cert/etc/kubernetes/cert清理kube-proxy和docker创建的iptables[root@k8s-node01~]#iptables-F&&sudoiptables-X&&sudoiptables-F-tnat&&sudoiptables-X-tnat删除flanneld和docker创建的网桥:[root@k8s-node01~]#iplinkdelflannel.1[root@k8s-node01~]#iplinkdeldocker02)清理 Master 节点 (master节点同样操作)

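The master and etcd cleanup expands variables such as ${ETCD_DATA_DIR} and ${ETCD_WAL_DIR} inside `rm -rf`. If environment.sh has not been sourced in the current shell, an empty variable makes the command silently do nothing, and a wrong value deletes the wrong directory. A minimal guard, assuming a hypothetical helper named `require_paths` and made-up example values, could look like this:

```shell
#!/bin/sh
# Hypothetical guard: succeed only if every named variable is set to an
# absolute path other than "/". Intended to run before any `rm -rf` that
# expands these variables.
require_paths() {
    for name in "$@"; do
        eval "value=\${$name:-}"
        case $value in
            /?*) ;;   # absolute path with at least one character after "/"
            *)
                echo "refusing to continue: $name='$value' is not a usable path" >&2
                return 1
                ;;
        esac
    done
}

# Example values only; the real ones come from /opt/k8s/bin/environment.sh.
ETCD_DATA_DIR=/data/k8s/etcd/data
ETCD_WAL_DIR=/data/k8s/etcd/wal
require_paths ETCD_DATA_DIR ETCD_WAL_DIR && echo "paths look sane"
```

The same check applies to ${K8S_DIR} and ${DOCKER_DIR} on the node side. The helper takes variable names rather than values so that the error message can say which variable was missing.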
Stop the related services:
[root@k8s-master01 ~]# systemctl stop kube-apiserver kube-controller-manager kube-scheduler kube-nginx

Clean up files:

Delete the systemd unit files
[root@k8s-master01 ~]# rm -rf /etc/systemd/system/{kube-apiserver,kube-controller-manager,kube-scheduler,kube-nginx}.service

Delete the program files
[root@k8s-master01 ~]# rm -rf /opt/k8s/bin/{kube-apiserver,kube-controller-manager,kube-scheduler}

Delete the certificate files
[root@k8s-master01 ~]# rm -rf /etc/flanneld/cert /etc/kubernetes/cert

Clean up the etcd cluster
[root@k8s-master01 ~]# systemctl stop etcd

Clean up files:
[root@k8s-master01 ~]# source /opt/k8s/bin/environment.sh

Delete the etcd working directory and data directory
[root@k8s-master01 ~]# rm -rf ${ETCD_DATA_DIR} ${ETCD_WAL_DIR}

Delete the systemd unit file
[root@k8s-master01 ~]# rm -rf /etc/systemd/system/etcd.service

Delete the program file
[root@k8s-master01 ~]# rm -rf /opt/k8s/bin/etcd

Delete the x509 certificate files
[root@k8s-master01 ~]# rm -rf /etc/etcd/cert/*

The dashboard deployed above is served over HTTPS with certificates. To access the Kubernetes cluster web UI over plain HTTP instead, proceed as follows:

1) Configure kubernetes-dashboard.yaml (the "k8s.gcr.io/kubernetes-dashboard-amd64:v1.10.1" image it references has already been downloaded onto the node nodes)

[root@k8s-master01 ~]# cd /opt/k8s/work/
[root@k8s-master01 work]# cat kubernetes-dashboard.yaml
# ------------------- Dashboard Secret ------------------- #

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kube-system
type: Opaque

---
# ------------------- Dashboard Service Account ------------------- #

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system

---
# ------------------- Dashboard Role & Role Binding ------------------- #

kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: kubernetes-dashboard-minimal
  namespace: kube-system
rules:
  # Allow Dashboard to create 'kubernetes-dashboard-key-holder' secret.
- apiGroups: [""]
  resources: ["secrets"]
  verbs: ["create"]
  # Allow Dashboard to create 'kubernetes-dashboard-settings' config map.
- apiGroups: [""]
  resources: ["configmaps"]
  verbs: ["create"]
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
- apiGroups: [""]
  resources: ["secrets"]
  resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs"]
  verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
- apiGroups: [""]
  resources: ["configmaps"]
  resourceNames: ["kubernetes-dashboard-settings"]
  verbs: ["get", "update"]
  # Allow Dashboard to get metrics from heapster.
- apiGroups: [""]
  resources: ["services"]
  resourceNames: ["heapster"]
  verbs: ["proxy"]
- apiGroups: [""]
  resources: ["services/proxy"]
  resourceNames: ["heapster", "http:heapster:", "https:heapster:"]
  verbs: ["get"]

---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: kubernetes-dashboard-minimal
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard-minimal
subjects:
- kind: ServiceAccount
  name: kubernetes-dashboard
  namespace: kube-system

---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kube-system
roleRef:
  kind: ClusterRole
  name: cluster-admin
  apiGroup: rbac.authorization.k8s.io

---
# ------------------- Dashboard Deployment ------------------- #

kind: Deployment
apiVersion: apps/v1beta2
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      containers:
      - name: kubernetes-dashboard
        image: k8s.gcr.io/kubernetes-dashboard-amd64:v1.10.1
        ports:
        - containerPort: 9090
          protocol: TCP
        args:
          # - --auto-generate-certificates
          # Uncomment the following line to manually specify Kubernetes API server Host
          # If not specified, Dashboard will attempt to auto discover the API server and connect
          # to it. Uncomment only if the default does not work.
          # - --apiserver-host=http://10.0.1.168:8080
        volumeMounts:
        - name: kubernetes-dashboard-certs
          mountPath: /certs
          # Create on-disk volume to store exec logs
        - mountPath: /tmp
          name: tmp-volume
        livenessProbe:
          httpGet:
            scheme: HTTP
            path: /
            port: 9090
          initialDelaySeconds: 30
          timeoutSeconds: 30
      volumes:
      - name: kubernetes-dashboard-certs
        secret:
          secretName: kubernetes-dashboard-certs
      - name: tmp-volume
        emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
      - key: node-role.kubernetes.io/master
        effect: NoSchedule

---
# ------------------- Dashboard Service ------------------- #

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  ports:
    - port: 9090
      targetPort: 9090
  selector:
    k8s-app: kubernetes-dashboard

# ------------------------------------------------------------
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-external
  namespace: kube-system
spec:
  ports:
    - port: 9090
      targetPort: 9090
      nodePort: 30090
  type: NodePort
  selector:
    k8s-app: kubernetes-dashboard

Create the resources from this yaml file
[root@k8s-master01 work]# kubectl create -f kubernetes-dashboard.yaml

Wait a moment, then check that the kubernetes-dashboard pod has been created (the output below shows it was scheduled onto k8s-node03, i.e. 172.16.60.246)
[root@k8s-master01 work]# kubectl get pods -n kube-system -o wide | grep "kubernetes-dashboard"
kubernetes-dashboard-7976c5cb9c-q7z2w   1/1   Running   0   10m   172.30.72.6   k8s-node03   <none>   <none>

[root@k8s-master01 work]# kubectl get svc -n kube-system | grep "kubernetes-dashboard"
kubernetes-dashboard-external   NodePort   10.254.227.142   <none>   9090:30090/TCP   10m
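To make the step from the pod listing to a browser address explicit, the sketch below reads the NODE column out of a `kubectl get pods -n kube-system -o wide` line and prints the HTTP address of the dashboard. `pod_nodes` is a hypothetical helper name; the sample line, the node address 172.16.60.246 and the nodePort 30090 are taken from the output and manifest in this section.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl get pods -n <ns> -o wide` lines on stdin
# and print the NODE column (7th field) of pods whose name starts with $1.
pod_nodes() {
    awk -v prefix="$1" 'index($1, prefix) == 1 { print $7 }'
}

# The pod line from the listing in this section, used as sample input.
line='kubernetes-dashboard-7976c5cb9c-q7z2w 1/1 Running 0 10m 172.30.72.6 k8s-node03 <none> <none>'
node=$(printf '%s\n' "$line" | pod_nodes kubernetes-dashboard)

# 172.16.60.246 is k8s-node03's address; 30090 is the nodePort in the manifest.
echo "dashboard pod runs on ${node}; HTTP UI: http://172.16.60.246:30090"
```

Because the kubernetes-dashboard-external Service is of type NodePort, port 30090 should normally answer on the other node addresses as well, not only on the node that runs the pod. The field position assumes a listing without the NAMESPACE column, as produced with `-n kube-system`.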
